The Data Thieves Who Built the Kingdom

Anthropic just called three Chinese AI companies thieves.

What they neglected to mention is that they built Claude the same way.

This week, Anthropic's security team published a detailed report accusing DeepSeek, Moonshot AI, and MiniMax of running 24,000 fraudulent accounts and executing 16 million Claude API conversations through a network of proxy servers the security team described — with impressive nomenclature — as a hydra cluster architecture. The complaint is real, the deception is documented, and Anthropic's anger is understandable.

But Anthropic's complaint isn't just about the fake accounts. It's about distillation itself — the idea that using Claude's outputs to train a competing model is, by its nature, a form of theft.

That's the claim worth examining. Because if distillation is theft, the entire AI industry has a problem. Starting with the people making the accusation.

What Actually Happened

Let's be precise, because precision matters here.

DeepSeek, Moonshot AI, and MiniMax are AI companies building open-source models. They signed up for API access to Claude. They paid for it: real money, per token, at Anthropic's published prices. And they used the outputs of those paid interactions as training data for their own models, a process called distillation, in which a smaller model is taught to replicate the behaviour of a larger one by learning from its responses.
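For readers who want to see the mechanics, here is a toy sketch of distillation. It is an illustration only, not how any of the companies named here train their models: the `teacher` function is a hypothetical stand-in for a large model behind an API, and the "student" is a tiny logistic model that never sees the teacher's internals, only its responses.

```python
import math
import random

def teacher(x: float) -> float:
    """Hypothetical stand-in for the large model: maps an input to a probability."""
    return 1.0 / (1.0 + math.exp(-(3.0 * x - 1.0)))

def train_student(inputs, targets, epochs=2000, lr=0.5):
    """Fit a one-feature logistic model to the teacher's soft outputs."""
    w, b = 0.0, 0.0
    n = len(inputs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, t in zip(inputs, targets):
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))
            # Gradient of cross-entropy against the teacher's probability t.
            grad_w += (p - t) * x
            grad_b += (p - t)
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

random.seed(0)
prompts = [random.uniform(-2.0, 2.0) for _ in range(200)]
responses = [teacher(x) for x in prompts]  # the only thing the student learns from
w, b = train_student(prompts, responses)

def student(x: float) -> float:
    """The distilled model: trained purely on the teacher's responses."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))
```

The point of the sketch is that the student only ever touches `prompts` and `responses`. Everything it knows comes from what the teacher said, which is why the question of who owns those outputs matters so much.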

The fake accounts and the hydra proxy network? That's a genuine terms of service violation. Anthropic explicitly prohibits using its outputs to train competing models. The deception — 24,000 fraudulent identities, a proxy architecture specifically designed to evade detection — is indefensible. That part of Anthropic's complaint stands.

But Anthropic conflates two separate things in its report: the deceptive method, and the distillation itself. The moral weight of "24,000 fake accounts" is being used to carry the legal argument that any distillation from Claude is theft — regardless of whether it's done honestly.

That's where it gets complicated.

The Data Flow Nobody Draws

Here is the actual sequence of events that produced Claude.

Anthropic scraped the open internet. Books, articles, forum posts, creative writing, academic papers, code repositories — the accumulated intellectual output of millions of people who were not asked, not notified, and not paid. This is not a conspiracy theory or a contested claim. It is how every frontier AI model was built, and Anthropic has never seriously disputed it.

From that data, Anthropic built Claude. A closed, proprietary model that Anthropic owns entirely and licenses commercially.

DeepSeek, Moonshot, and MiniMax then paid Anthropic for API access. They used Claude's outputs — outputs generated from data Anthropic didn't pay for — to train models they released as open source. Free. For anyone. Forever.

So the complete data flow looks like this:

Open internet creators produce content. Anthropic takes it, unpaid. Builds a closed model. Sells access. Paid customers use those outputs to build open-source products the whole world can use for free.

Anthropic is the only closed, proprietary link in that entire chain. The net transfer of value flows from open creators, through a closed commercial model, toward open-source outputs that lower the cost of AI for everyone. And the company sitting in the middle — the one that captured and monetised everyone else's creative output — is the one calling someone else a thief.

There's one more detail. Those 16 million conversations Anthropic describes as stolen property? Anthropic's own terms of service permit the company to use user conversations to train future versions of Claude. Anthropic was paid for the API access. It will train its next model on the conversations it was paid to facilitate. And it is simultaneously calling the paying customers who generated those conversations criminals for doing, in sequence, exactly what Anthropic does in parallel.

To be precise: Anthropic took data it didn't pay for, built a closed product, sold access to it, will train its next model on the conversations it was paid to host, and is now calling the people who paid it thieves for doing the same thing.

The legal term for this is chutzpah. The technical term is having your cake, eating it, and then filing a police report about the cake.

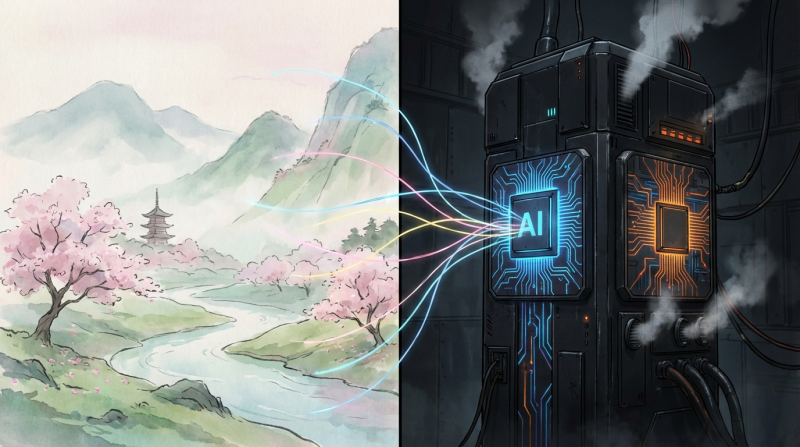

OpenAI and the Studio Ghibli Problem

Before anyone concludes this is a problem unique to Anthropic, let's talk about OpenAI.

In late March 2025, OpenAI shipped an image generator that users immediately put to work producing images in the style of Studio Ghibli. Lush watercolour landscapes. Distinctive character proportions. The specific visual language that Hayao Miyazaki spent fifty years developing by hand, frame by frame, as an explicit rejection of mechanical reproduction of any kind.

Miyazaki's position on AI-generated art has been on the record for years. In a widely circulated 2016 interview — well before the current wave — he watched an AI animation demo and called it "an insult to life itself." He was not consulted about OpenAI's feature. He was not licensed. He was not paid.

OpenAI's legal position is that training on publicly available images constitutes transformative use under copyright law and is therefore permissible. It echoes the fair-use defence that record labels defeated in the early 2000s, when Napster argued that its users were merely sampling music before deciding whether to buy it. The rules of transformative use turn out to be considerably more elastic when the entity invoking them has a $157 billion valuation and a direct line to government.

The commercial dimension deserves emphasis. OpenAI didn't just train on Ghibli's visual style. It shipped a product that allows anyone to generate Ghibli-adjacent imagery commercially, at scale, on demand — without a single yen going to Studio Ghibli, its animators, or the human beings who spent careers developing that aesthetic. OpenAI charged for the feature. Ghibli received nothing.

That is not transformative use. That is a licensing agreement that nobody signed.

Hollywood's Glass House

A few weeks before all of this, Hollywood's IP lawyers were in full voice about Chinese AI video platforms — specifically Kling and Seedance — describing them as intellectual property pirates posing an existential threat to American creative industry. The language was vivid. The moral indignation was consistent.

The same studios making those arguments have, for a century, built their libraries by appropriating folk tales, public domain literature, indigenous cultural imagery, and fairy tales from traditions that predated their existence by centuries. That practice was standard. It was legal. And it was never described as theft.

More recently: the Writers Guild of America struck in 2023 in part because studios were using AI tools — trained on the scripts those same writers delivered under existing contracts — to generate story breakdowns, scene analyses, and script coverage. The writers' contracts predated AI and had never licensed their work for that purpose. They were told to accept a compromise. They did. Studios accepted the compromise and, in many cases, continued the practice.

Now those same studios want protection from Chinese platforms doing to American visual content what American studios were doing to their own writers' intellectual property eighteen months ago.

The geometry of these arguments is revealing. The party invoking IP protection is consistently the party that has already extracted what it needed from someone else's creative work, established market dominance on that foundation, and is now using legal mechanisms to prevent the next entrant from doing the same thing.

The Pattern

State it plainly, because it deserves to be stated plainly.

The companies most aggressively asserting intellectual property rights in AI are, without exception, the same companies that built their market positions using data they did not pay for. This is not a coincidence. It is a strategy — and it follows a sequence that should by now be familiar.

Phase one: move fast, scrape everything, build the model, establish dominance before the legal frameworks catch up. Phase two: once dominance is established, reframe the data acquisition question as a settled matter and pivot to protecting your own outputs. Phase three: lobby for legislation that enshrines phase two as the legal standard — ensuring that no future competitor can build the same way you did.

OpenAI is currently in phase three. Anthropic is somewhere between two and three. The legislation currently being shaped in Washington — with significant input from the major AI labs — would, if passed in its current form, make it substantially harder for new entrants to train on publicly available data at scale. Which is, entirely coincidentally, exactly how OpenAI and Anthropic were built.

The Kling and Seedance story is the same story told from the other direction. Western studios crying IP theft from Chinese video platforms are describing, precisely, what those studios spent a century doing to everyone else. The outrage is real. The self-awareness is not.

What This Actually Means

The fake accounts were deceptive. The hydra cluster architecture was designed to circumvent terms of service. Those things are true and Anthropic is right to be angry about them.

But the anger about distillation — the suggestion that training a model on Claude's outputs is inherently theft — only holds if you believe Anthropic acquired its own training data legitimately. By the standard Anthropic is applying to DeepSeek, it didn't. By that same standard, neither did OpenAI. Neither did Google. The foundation the entire industry is built on is, at minimum, morally contested and legally unresolved.

The question this raises is not whether AI companies took data to get here. Several of them clearly did, by any reasonable definition of the word. The question is whether we are going to let them write the laws that define what taking data means next.

Because if we do, we will have built the most sophisticated intellectual property moat in the history of technology — constructed entirely from other people's intellectual property — and handed the keys to the people who got there first.

The meme wrote itself.

Anthropic handed it the pen.

Bangkok8 AI covers the AI stories that the companies involved would prefer you didn't read too carefully.